Adding AI-generated image description to Ice Cubes

A little story on why and how I did it

I’ve recently released a new feature for Ice Cubes, and users have loved it! On Mastodon, it’s considered good etiquette to add media descriptions when posting images on the network. They’re essential for visually impaired people, but also useful for anyone who wants more detail about an image.

If you’re unfamiliar with Ice Cubes, you can read my introduction story about it; it’s an open-source Mastodon client.

Here is some user feedback and a demo of the feature:

And I’m guilty of not taking the time to add them: sometimes I’m too lazy, and sometimes I simply forget.

Well, no more. Now, when uploading photos within Ice Cubes, you can use the new OpenAI Vision API to get a description of the image in one tap.

I wanted to write about how I built it, because it can look like magic, but it’s not. It’s just a few lines of SwiftUI, a bit of networking, and one OpenAI API.

Let’s get started with the OpenAI client. In Ice Cubes, I added OpenAI integration before anyone had made a third-party Swift OpenAI client, so I have my own simple client in the app. You can find the complete code of the client here.

To keep things simple, here is a condensed version with just what you need for the Vision API:

```swift
import Foundation

protocol OpenAIRequest: Encodable {
  var path: String { get }
  var model: String { get }
}

extension OpenAIRequest {
  // Every request in this condensed client targets the Chat Completions endpoint.
  var path: String {
    "chat/completions"
  }
}

public struct OpenAIClient {
  private let endpoint: URL = .init(string: "https://api.openai.com/v1/")!

  // Placeholder only: don't ship a real key inside the app.
  private var APIKey: String {
    "your API Key"
  }

  private var authorizationHeaderValue: String {
    "Bearer \(APIKey)"
  }

  // Swift's camelCase properties (maxTokens, imageUrl) become the API's snake_case keys.
  private var encoder: JSONEncoder {
    let encoder = JSONEncoder()
    encoder.keyEncodingStrategy = .convertToSnakeCase
    return encoder
  }

  private var decoder: JSONDecoder {
    let decoder = JSONDecoder()
    decoder.keyDecodingStrategy = .convertFromSnakeCase
    return decoder
  }

  public struct VisionRequest: OpenAIRequest {
    public struct Message: Encodable {
      public struct MessageContent: Encodable {
        public struct ImageUrl: Encodable {
          public let url: URL
        }

        public let type: String        // "text" or "image_url"
        public let text: String?       // set for the "text" part
        public let imageUrl: ImageUrl? // set for the "image_url" part
      }

      public let role = "user"
      public let content: [MessageContent]
    }

    let model = "gpt-4-vision-preview"
    let messages: [Message]
    let maxTokens = 50 // keeps the generated description short
  }

  public enum Prompt {
    case imageDescription(image: URL)

    var request: any OpenAIRequest {
      switch self {
      case let .imageDescription(image):
        // One user message with two content parts: the instruction, then the image.
        return VisionRequest(messages: [
          .init(content: [
            .init(type: "text",
                  text: "What’s in this image? Be brief, it's for image alt description on a social network. Don't write in the first person.",
                  imageUrl: nil),
            .init(type: "image_url", text: nil, imageUrl: .init(url: image)),
          ]),
        ])
      }
    }
  }

  public struct Response: Decodable {
    public struct Choice: Decodable {
      public struct Message: Decodable {
        public let role: String
        public let content: String
      }

      public let message: Message?
    }

    public let choices: [Choice]
  }

  public init() {}

  public func request(_ prompt: Prompt) async throws -> Response {
    let body = prompt.request
    var request = URLRequest(url: endpoint.appending(path: body.path))
    request.httpMethod = "POST"
    request.setValue(authorizationHeaderValue, forHTTPHeaderField: "Authorization")
    request.setValue("application/json", forHTTPHeaderField: "Content-Type")
    request.httpBody = try encoder.encode(body)
    // Suspend until the response arrives, then decode it; errors propagate to the caller.
    let (result, _) = try await URLSession.shared.data(for: request)
    return try decoder.decode(Response.self, from: result)
  }
}
```

Here, we’re defining our request and response types, one Encodable and the other Decodable. Then, we use URLRequest and URLSession, with a sprinkle of Swift concurrency, to send the query to the OpenAI API.
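To see what the Decodable half does on its own, here is a minimal sketch that decodes a hand-written payload shaped like a Chat Completions reply. The sample JSON is made up for illustration, and the `finishReason` field is an addition that the condensed client doesn’t declare; `convertFromSnakeCase` maps the API’s `finish_reason` key onto it, and unknown keys such as `index` are simply ignored.

```swift
import Foundation

// Minimal mirror of the client's Response type, so this sketch compiles on its own.
struct Response: Decodable {
  struct Choice: Decodable {
    struct Message: Decodable {
      let role: String
      let content: String
    }
    let message: Message?
    let finishReason: String? // decoded from "finish_reason"
  }
  let choices: [Choice]
}

// Hand-written payload in the shape of a Chat Completions reply (not real API output).
let sample = """
{
  "choices": [
    {
      "index": 0,
      "finish_reason": "stop",
      "message": { "role": "assistant", "content": "  A cat sleeping on a sofa.\\n" }
    }
  ]
}
"""

let decoder = JSONDecoder()
decoder.keyDecodingStrategy = .convertFromSnakeCase

let response = try decoder.decode(Response.self, from: Data(sample.utf8))
// The description is the first choice's message content, minus stray whitespace.
let text = response.choices.first?.message?.content
  .trimmingCharacters(in: .whitespacesAndNewlines)
print(text ?? "no description") // prints "A cat sleeping on a sofa."
```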

As for the API key, you shouldn’t put it directly in your app: anything shipped in the app binary can be extracted, so the key would be freely available for anyone to find and exploit.
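One common alternative is to route requests through a small server you control, which holds the key and forwards calls to OpenAI. The sketch below only covers the app side and rests on assumptions: the proxy URL and `makeProxyRequest` are hypothetical, and the server is assumed to attach the `Authorization` header itself. In practice you’d also want some way to authenticate or rate-limit the app’s calls to that server.

```swift
import Foundation
#if canImport(FoundationNetworking)
import FoundationNetworking // URLRequest lives here on Linux
#endif

// Hypothetical proxy you run yourself; it holds the OpenAI key, not the app.
let proxyEndpoint = URL(string: "https://example.com/openai-proxy/")!

// Builds a request to the proxy. No OpenAI key is sent from the device;
// the server is assumed to add the Authorization header before forwarding.
func makeProxyRequest(path: String, body: Data) -> URLRequest {
  var request = URLRequest(url: proxyEndpoint.appendingPathComponent(path))
  request.httpMethod = "POST"
  request.setValue("application/json", forHTTPHeaderField: "Content-Type")
  request.httpBody = body
  return request
}

let request = makeProxyRequest(path: "chat/completions", body: Data("{}".utf8))
print(request.url!.absoluteString)
```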

The most exciting part of this code is probably the prompt.

The prompt sent to the Vision API is a single user message with two parts: first the text instruction, then the URL of your image. Here is the instruction:

What’s in this image? Be brief, it’s for image alt description on a social network. Don’t write in the first person.
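To make the shape of the prompt concrete, here is a self-contained sketch that rebuilds simplified copies of the request types and encodes them the way the client does. The image URL is a placeholder. It shows one user message whose `content` array holds a text part and an image part, that `convertToSnakeCase` turns `maxTokens` and `imageUrl` into `max_tokens` and `image_url`, and that the `nil` fields are left out of each part.

```swift
import Foundation

// Simplified copies of the client's request types, for this demo only.
struct ImageUrl: Encodable { let url: URL }

struct MessageContent: Encodable {
  let type: String
  let text: String?
  let imageUrl: ImageUrl?
}

struct Message: Encodable {
  let role = "user"
  let content: [MessageContent]
}

struct VisionRequest: Encodable {
  let model = "gpt-4-vision-preview"
  let messages: [Message]
  let maxTokens = 50
}

// Placeholder image URL, for illustration only.
let imageURL = URL(string: "https://example.com/photo.jpg")!

let visionRequest = VisionRequest(messages: [
  Message(content: [
    MessageContent(type: "text",
                   text: "What’s in this image? Be brief, it's for image alt description on a social network. Don't write in the first person.",
                   imageUrl: nil),
    MessageContent(type: "image_url", text: nil, imageUrl: ImageUrl(url: imageURL)),
  ]),
])

let encoder = JSONEncoder()
encoder.keyEncodingStrategy = .convertToSnakeCase
encoder.outputFormatting = [.prettyPrinted, .sortedKeys]
// Synthesized Encodable skips nil optionals, so each part carries only its own field.
let json = String(decoding: try encoder.encode(visionRequest), as: UTF8.self)
print(json)
```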

This is probably what took me the longest when building this feature. I tried various prompts: by default, GPT is very verbose and talks in the first person. I found that with a limit of 50 tokens and this prompt, I got very good results. The description is short and concise, and it reads like something a human would naturally write. This is what shipped in the app update, and I’ll probably keep refining it.
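Capping the output at 50 tokens has a side effect worth knowing about: when the model hits the limit it simply stops, possibly mid-sentence, and the API reports a `finish_reason` of `"length"`. A hypothetical helper like this one (not part of Ice Cubes) could trim such a reply back to its last complete sentence before it lands in the text field.

```swift
import Foundation

// Hypothetical helper: drops a trailing fragment left behind when
// generation stops at the token limit.
func trimToLastSentence(_ text: String) -> String {
  let trimmed = text.trimmingCharacters(in: .whitespacesAndNewlines)
  // Find the last sentence-ending punctuation mark.
  guard let end = trimmed.lastIndex(where: { ".!?".contains($0) }) else {
    // No complete sentence at all: keep the text rather than return nothing.
    return trimmed
  }
  return String(trimmed[...end])
}

print(trimToLastSentence("A cat asleep on a sofa. Sunlight falls across"))
// prints "A cat asleep on a sofa."
```

The rule is deliberately naive: it would also cut at an abbreviation like "e.g." or a decimal point, so it’s best applied only when the reply was actually truncated.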

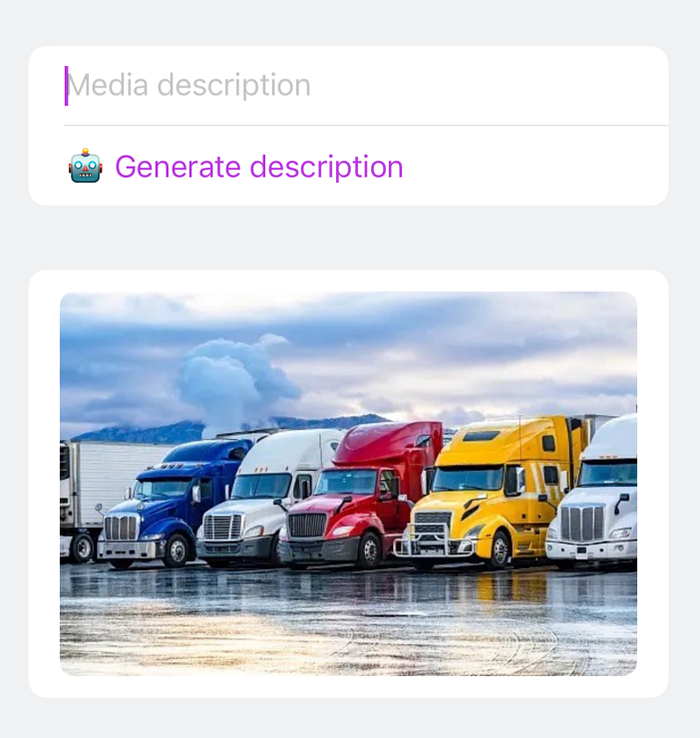

The next part is the UI: a simple form with a TextField where users can type their own description, a button below it to generate one with AI, and then the image they uploaded from the post composer.

Here is the code. It’s nothing fancy apart from a few loading states and the call to our OpenAI client.

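```swift
// Assumed view state, declared elsewhere in the view:
// @State private var imageDescription = ""
// @State private var isGeneratingDescription = false
// @FocusState private var isFieldFocused: Bool
// `container` (the uploaded media) and `preferences` come from the surrounding Ice Cubes view.

Form {
  Section {
    TextField("status.editor.media.image-description",
              text: $imageDescription, axis: .vertical)
      .focused($isFieldFocused)
    generateButton
  }

  Section {
    AsyncImage( ... )
  }
}

@ViewBuilder
private var generateButton: some View {
  // Only offered once the media has a URL and OpenAI features are enabled.
  if let url = container.mediaAttachment?.url, preferences.isOpenAIEnabled {
    Button {
      Task {
        if let description = await generateDescription(url: url) {
          imageDescription = description
        }
      }
    } label: {
      if isGeneratingDescription {
        ProgressView()
      } else {
        Text("Generate description")
      }
    }
  }
}

private func generateDescription(url: URL) async -> String? {
  isGeneratingDescription = true
  let client = OpenAIClient()
  // try? turns any network or decoding error into a nil description.
  let response = try? await client.request(.imageDescription(image: url))
  isGeneratingDescription = false
  // Use the first choice's text, without surrounding whitespace or newlines.
  return response?.choices.first?.message?.content
    .trimmingCharacters(in: .whitespacesAndNewlines)
}
```

And that’s it. It’s nothing crazy; I’m just using modern SwiftUI and one of the AI APIs available out there!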

You can find the full Ice Cubes code here

Download the app on the App Store!

Happy coding! 🤖